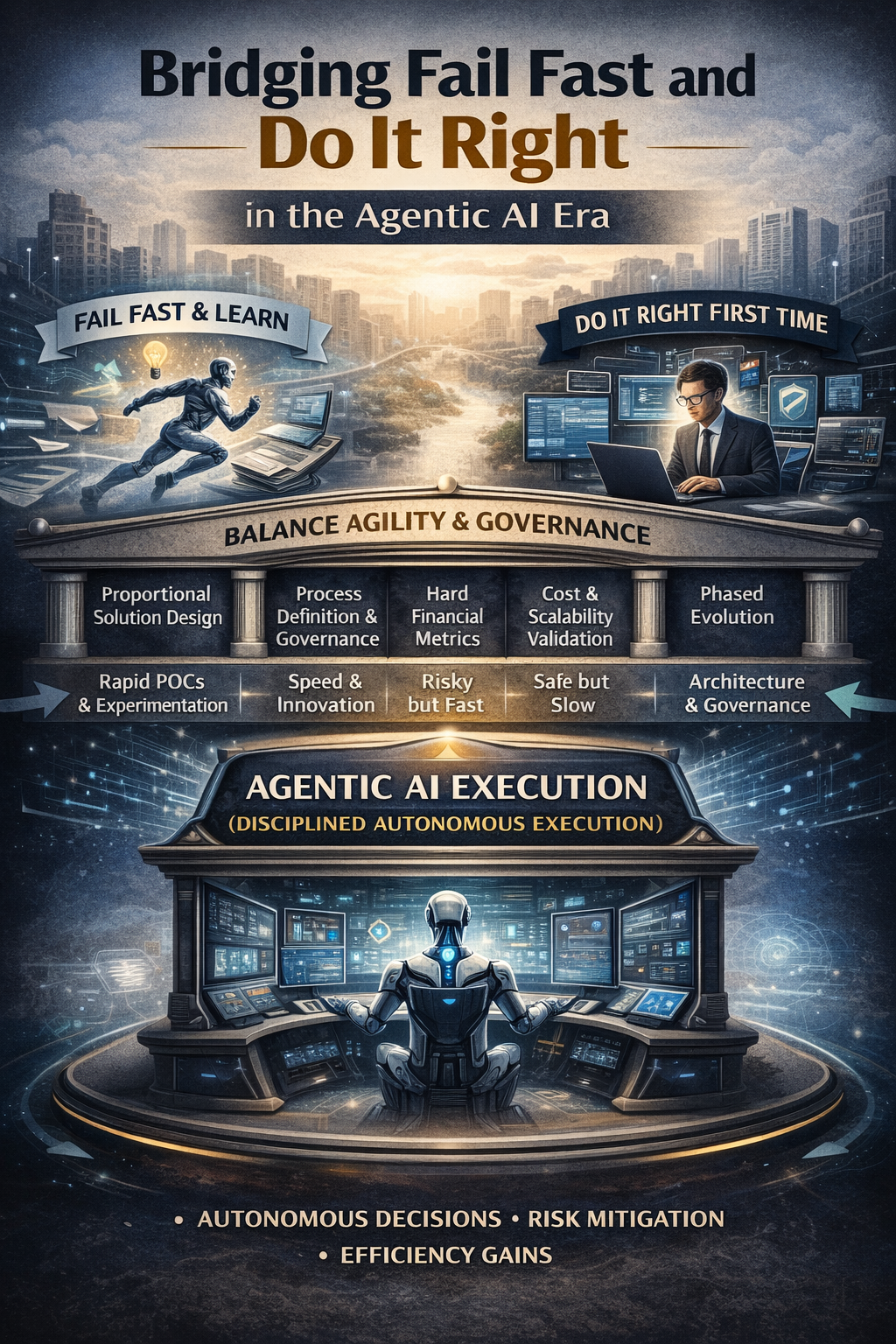

Enterprise analytics is undergoing its most significant transformation to date. After an era of dashboards that offered visibility, and of decision intelligence that enhanced human judgment, we are moving to agentic AI systems that can autonomously execute bounded decisions. The stakes for enterprise architecture and governance have never been higher as the emphasis shifts from content generation to actual work delegation. In the era of Agentic AI, architectural errors no longer result in dormant dashboards; they can trigger autonomous operational actions. Two value systems compete to shape the response: one prizes speed and experimentation, the other stability and rigor. To operationalize AI autonomy safely and responsibly, organizations must acknowledge that neither extreme is sufficient in isolation and must learn to operate deftly across both.

Tale of Two Value Systems

The tension between these two ecosystems stems from profoundly different approaches to problem-solving, timelines, and risk.

The “Fail Fast & Learn” Ecosystem (Innovation & Agility) is a culture that prioritizes getting things done with urgency. It approaches problem-solving through rapid Proofs of Concept (POCs) and iterative deployment in order to reach working solutions quickly.

• Mindset: “Quick and dirty” development to test hypotheses; the culture thrives on speed.

• Horizon: Problem statements and technology visions focus primarily on the “here and now.”

• The Trade-off: The assumption is that external (core) technology providers will always be ahead, so less emphasis is placed on building deep internal competency. In the end, structural quality is compromised in favor of time and cost.

The “Do It Right First Time” Ecosystem (Stability & Architecture) stands in contrast to “fail fast.” This is the environment responsible for “keeping the lights on,” and it prioritizes an engineering mindset founded on rigorous research and analysis in order to identify the most effective technologies and practices.

• Mindset: It emphasizes building deep techno-functional competencies within the organization and relies on structured problem definition.

• Horizon: Processes and technology are assessed over an extended period of time.

• The Trade-off: Deployment speed is sacrificed in favor of quality and cost.

The Agentic AI Collision Course

When developing predictive models or dashboards, the worst consequences of a “fail fast” approach are a skewed recommendation or an unused dashboard, risks that can be mitigated. In the Agentic AI era, the system no longer waits for a human to interpret a chart. It executes tasks autonomously.

Applying a purely “fail fast” methodology to autonomous agents risks deploying weakly governed, unpredictable systems that cause immediate operational or reputational damage. On the other hand, an inflexible “do it right first time” approach can lead to analysis paralysis, causing the enterprise to miss out on the significant efficiency gains of AI.

The aim is an operating model that strikes a balance between architectural rigor and agility.

A Pragmatic Way to Balance the Value Systems

A structured framework is necessary to bridge these two value systems, particularly when evaluating ambitious Agentic AI proposals from external partners or internal innovation centers. A balanced architecture requires rigorous examination of five dimensions:

- Proportional Solution Design: Before deploying an LLM, determine whether agentic capabilities are truly necessary or whether deterministic automation is sufficient. Overengineering is frequently the result of “fail fast” enthusiasm. Maintaining an engineering perspective keeps the design proportional, preventing unnecessary complexity and inflated operating costs. Using an LLM for straightforward invoice routing, where deterministic optical character recognition (OCR) would suffice, is an example of this kind of overengineering.

- Process Definition and Governance: Agentic systems require explicit boundaries. The “To-Be” process must explicitly define expected automation levels, including the distinction between human-in-the-loop and straight-through processing. Establishing precise human intervention points and escalation criteria provides design clarity, governance, and appropriate supervision without suffocating the agent’s utility.

- Hard, Measurable Financial Metrics: Innovation must ultimately contribute to the bottom line. Proposals must include measurable key performance indicators (KPIs), such as automation percentages, Turnaround Time (TAT), and error or revision reduction. This allows the objective validation of business impact that finance and program management functions require.

- Validation of Cost and Scalability: A rapid proof of concept (POC) may appear successful in a sandbox, but the architectural mindset must ask: what is the anticipated operating cost at current production volumes? Scalability should be cross-checked against the budget to avoid deploying solutions that are technically remarkable but financially unfeasible.

- Phased Evolution: A phased rollout is the most effective bridge between stability and speed. To capture immediate value, organizations should start with rules-based deterministic automation and then layer on AI augmentation as the system proves itself. This minimizes risk and allows value to be realized incrementally.
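
Two of these dimensions, explicit escalation criteria and cost validation at production volume, lend themselves to a small illustration. The Python sketch below is only one possible way to express them. Every name and number in it (`Policy`, `route_action`, `monthly_operating_cost`, the thresholds, and the per-task costs) is a hypothetical assumption chosen for illustration and does not come from any particular product or from the framework above.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    STRAIGHT_THROUGH = "straight_through"    # agent acts without review
    HUMAN_IN_THE_LOOP = "human_in_the_loop"  # agent proposes, a human approves
    ESCALATE = "escalate"                    # agent stops, a human takes over


@dataclass(frozen=True)
class Policy:
    # Hypothetical thresholds; a real "To-Be" process would define its own.
    max_auto_amount: float = 1_000.0  # largest value the agent may act on alone
    min_confidence: float = 0.90      # below this, a human must approve
    escalate_below: float = 0.60      # below this, the agent must not act at all


def route_action(amount: float, confidence: float,
                 policy: Policy = Policy()) -> Route:
    """Apply explicit intervention points to one proposed agent action."""
    if confidence < policy.escalate_below:
        return Route.ESCALATE
    if amount > policy.max_auto_amount or confidence < policy.min_confidence:
        return Route.HUMAN_IN_THE_LOOP
    return Route.STRAIGHT_THROUGH


def monthly_operating_cost(tasks_per_month: int, llm_share: float,
                           cost_per_llm_task: float,
                           cost_per_rule_task: float) -> float:
    """Project run cost when only a share of tasks is handled by an LLM."""
    llm_tasks = tasks_per_month * llm_share
    rule_tasks = tasks_per_month - llm_tasks
    return llm_tasks * cost_per_llm_task + rule_tasks * cost_per_rule_task


if __name__ == "__main__":
    print(route_action(amount=200.0, confidence=0.97))
    print(route_action(amount=5_000.0, confidence=0.97))
    print(route_action(amount=200.0, confidence=0.50))
    # Illustrative volumes and unit costs only.
    print(monthly_operating_cost(100_000, 0.2, 0.05, 0.001))
```

Keeping the thresholds in a single `Policy` object is a deliberate choice in this sketch: it lets governance teams review and change the boundaries without touching agent logic, and raising `llm_share` over time mirrors a phased rollout that starts mostly rules-based.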

The Way Ahead

Agentic AI requires a new operating discipline, one that integrates architectural accountability with experimental agility. Organizations that institutionalize this balance can unlock autonomous execution safely. Those that lean too far toward either extreme will face uncontrolled risk or stagnant innovation. The next decade of enterprise data strategy will not be determined by the most exhaustive architecture documentation or the quickest proof of concept. The future belongs to organizations that can integrate these distinct cultures, combining the CDO’s dynamism with the CIO’s requirement for governance. By adhering to proportional design, a phased deployment approach, and explicit escalation paths, enterprises can effectively transition from content generation to autonomous work delegation. Agentic AI will not reward efficiency or governance alone; rather, it will reward disciplined autonomy.